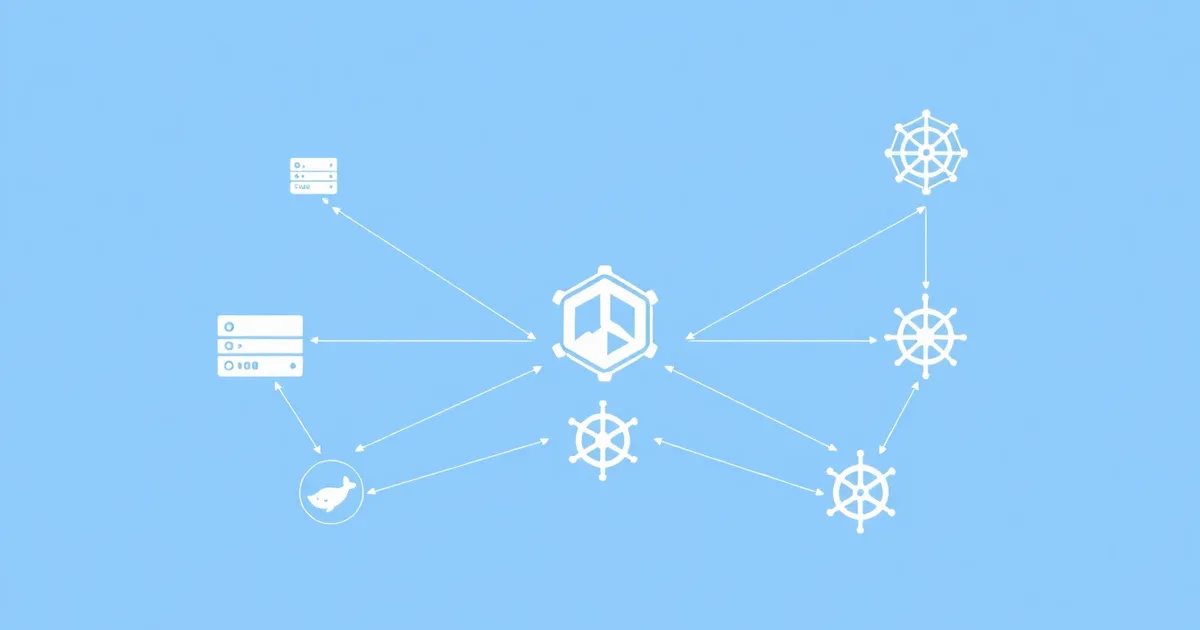

Container orchestration platforms have become the backbone of modern DevOps infrastructure as organizations deploy increasingly complex containerized applications at scale. In 2026, the landscape offers mature solutions like Kubernetes and Docker Swarm alongside emerging alternatives that cater to specific use cases. These platforms automate the deployment, scaling, and management of containerized applications, reducing operational overhead while improving reliability and resource utilization.

Key Takeaways:

- Kubernetes dominates, powering over 87% of containerized workloads, but requires significant expertise

- Docker Swarm offers simplicity for smaller deployments with built-in Docker integration

- Alternative platforms like Nomad and OpenShift provide specialized features for specific requirements

- Cloud-native solutions from AWS, Azure, and GCP reduce management complexity

- Consider team expertise, scalability needs, and budget when choosing platforms

What Are Container Orchestration Platforms and Why Do You Need Them?

Container orchestration platforms are software systems that automate the deployment, management, scaling, and networking of containerized applications across clusters of machines. These platforms solve critical challenges that emerge when running containers in production environments.

Without orchestration, managing containers manually becomes impractical beyond small-scale deployments. These platforms provide essential capabilities including automated scaling, service discovery, load balancing, rolling updates, and fault tolerance.

The benefits extend beyond basic container management. Modern orchestration platforms integrate with CI/CD pipelines, monitoring systems, and security tools, creating comprehensive DevOps ecosystems that streamline application lifecycle management.

How Does Kubernetes Compare to Other Container Orchestration Platforms in 2026?

Kubernetes maintains its position as the leading container orchestration platform in 2026, powering over 87% of containerized workloads according to the Cloud Native Computing Foundation's annual survey. Its extensive ecosystem, community support, and feature richness make it the default choice for large-scale deployments.

Key Kubernetes advantages include:

- Declarative configuration: Define desired state through YAML manifests

- Extensive ecosystem: Thousands of operators, tools, and integrations

- Multi-cloud portability: Run consistently across different cloud providers

- Advanced scheduling: Sophisticated workload placement and resource optimization

- Robust security: Role-based access control, network policies, and secrets management
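To make the declarative model concrete, here is a minimal sketch that builds a Deployment manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The service name, image reference, and port are placeholders chosen for illustration, not values from any real cluster.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int = 3) -> dict:
    """Build a minimal apps/v1 Deployment describing the desired state."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template below.
            "selector": {"matchLabels": {"app": name}},
            "template": {
                "metadata": {"labels": {"app": name}},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            # Port 8080 is an assumption for this example.
                            "ports": [{"containerPort": 8080}],
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # "web" and the registry host are hypothetical.
    manifest = deployment_manifest("web", "registry.example.com/web:1.4.2")
    print(json.dumps(manifest, indent=2))
```

You describe what should exist (three replicas of one image) and the control plane works out how to get there. Changing `replicas` and re-applying the manifest is the whole scaling workflow.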

However, Kubernetes' complexity remains a significant challenge. Organizations often require dedicated platform engineering teams and substantial training investments. The learning curve can delay time-to-market for teams new to container orchestration.

Kubernetes Managed Services vs Self-Managed Clusters

Managed Kubernetes services like Amazon EKS, Google GKE, and Azure AKS have matured significantly by 2026. These platforms handle control plane management, security patches, and cluster upgrades, reducing operational burden while maintaining Kubernetes' full feature set.

Self-managed Kubernetes clusters offer complete control but require expertise in cluster lifecycle management, security hardening, and troubleshooting. Organizations with specific compliance requirements or unique infrastructure needs often choose this approach.

What Makes Docker Swarm a Viable Alternative for Container Orchestration?

Docker Swarm provides a simpler alternative to Kubernetes, offering built-in orchestration capabilities within the Docker ecosystem. While less feature-rich than Kubernetes, Swarm excels in scenarios requiring quick deployment and minimal operational complexity.

Docker Swarm's primary strengths include:

- Simplified setup: Initialize clusters with single commands

- Native Docker integration: Use familiar Docker Compose syntax

- Lower resource overhead: Minimal infrastructure requirements

- Built-in service discovery: Automatic load balancing and networking

- Rolling updates: Zero-downtime deployments with simple configuration
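As a rough illustration of how little configuration a Swarm rolling update needs, the sketch below assembles a Compose-style stack definition with a `deploy.update_config` section and prints it as JSON for inspection. The service name, image, and port mapping are hypothetical, and the update settings are one reasonable choice rather than recommended defaults.

```python
import json


def swarm_stack(service: str, image: str, replicas: int = 3) -> dict:
    """Build a Compose v3-style stack definition with rolling-update settings."""
    return {
        "version": "3.8",
        "services": {
            service: {
                "image": image,
                "ports": ["8080:8080"],  # assumed port mapping
                "deploy": {
                    "replicas": replicas,
                    "update_config": {
                        "parallelism": 1,        # replace one task at a time
                        "delay": "10s",          # pause between batches
                        "order": "start-first",  # start the new task before stopping the old one
                        "failure_action": "rollback",
                    },
                },
            }
        },
    }


if __name__ == "__main__":
    print(json.dumps(swarm_stack("web", "registry.example.com/web:1.4.2"), indent=2))
```

In practice the same structure would normally live in a YAML Compose file and be deployed with `docker stack deploy`. The point here is only that the update policy fits in four keys.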

Organizations choosing Docker Swarm typically have smaller-scale deployments, limited DevOps expertise, or requirements for rapid prototyping. The platform works particularly well for teams already comfortable with Docker who need orchestration without Kubernetes' complexity.

Docker Swarm Limitations and Considerations

While Docker Swarm offers simplicity, it lacks advanced features available in Kubernetes. The ecosystem is significantly smaller, with fewer third-party tools and integrations. Advanced networking, storage orchestration, and complex deployment patterns require workarounds or external tools.

Scaling limitations become apparent in large deployments. Swarm's scheduling capabilities, while adequate for most use cases, cannot match Kubernetes' sophisticated algorithms for resource optimization and workload placement.

Which Alternative Container Orchestration Platforms Should You Consider?

Beyond Kubernetes and Docker Swarm, several specialized platforms offer unique advantages for specific use cases. These alternatives have gained traction in 2026 as organizations seek solutions tailored to their particular requirements.

HashiCorp Nomad

Nomad provides multi-workload orchestration, supporting containers, VMs, and standalone applications within a single platform. Its lightweight architecture and operational simplicity appeal to organizations managing diverse workloads.

Nomad excels in:

- Multi-workload support: Orchestrate containers alongside legacy applications

- Edge computing: Lightweight footprint suitable for resource-constrained environments

- HashiCorp integration: Seamless integration with Vault, Consul, and Terraform

- Operational simplicity: Single binary deployment with minimal configuration

Red Hat OpenShift

OpenShift builds upon Kubernetes with enterprise-focused features including developer tools, security enhancements, and operational automation. It provides a complete container platform rather than just orchestration.

OpenShift advantages include:

- Developer experience: Integrated CI/CD pipelines and source-to-image builds

- Enterprise security: Built-in security scanning and compliance tools

- Multi-tenancy: Project-based isolation and resource quotas

- Hybrid cloud support: Consistent experience across on-premises and cloud environments

Apache Mesos with Marathon

While less popular in 2026, Mesos remains relevant for organizations requiring fine-grained resource sharing between different frameworks. Marathon provides container orchestration on top of Mesos' resource management layer.

| Platform | Best For | Learning Curve | Ecosystem Size | Enterprise Support |
|---|---|---|---|---|
| Kubernetes | Large-scale, complex deployments | High | Very Large | Excellent |
| Docker Swarm | Simple deployments, small teams | Low | Small | Limited |
| Nomad | Multi-workload, edge computing | Medium | Medium | Good |
| OpenShift | Enterprise Kubernetes | High | Large | Excellent |
| Mesos/Marathon | Resource sharing, mixed workloads | Very High | Small | Limited |

How Do Cloud-Native Container Services Compare to Self-Managed Solutions?

Cloud providers have significantly enhanced their container orchestration offerings by 2026, providing fully managed services that reduce operational complexity while maintaining platform capabilities.

Amazon ECS and EKS lead AWS's container strategy, with ECS offering a proprietary orchestration layer optimized for AWS services, while EKS provides managed Kubernetes. Both integrate deeply with AWS ecosystem components like IAM, VPC, and CloudWatch.

Google Cloud Run and GKE emphasize serverless and managed approaches respectively. Cloud Run abstracts away infrastructure entirely for stateless applications, while GKE provides advanced Kubernetes features like Autopilot mode for hands-off cluster management.

Azure Container Instances and AKS offer similar managed experiences with strong integration into Microsoft's enterprise ecosystem. Azure's focus on hybrid scenarios appeals to organizations with on-premises infrastructure requirements.

Cost Considerations for Cloud vs Self-Managed

Managed services typically cost 20-30% more than self-managed infrastructure but eliminate operational overhead. Organizations must factor in the hidden costs of self-management: specialized expertise, monitoring tools, security updates, and disaster recovery planning.

Small to medium organizations often find managed services more cost-effective when considering total cost of ownership. Large enterprises with existing expertise may prefer self-managed solutions for cost optimization and customization capabilities.
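The trade-off above is easier to reason about with numbers. This is a back-of-the-envelope sketch, not a pricing model: the 25% premium is simply the midpoint of the 20-30% range quoted above, and the infrastructure, staffing, and tooling figures in the example are invented for illustration.

```python
def managed_cost(self_managed_infra: float, premium: float = 0.25) -> float:
    """Monthly cost of a managed service, modeled as infrastructure plus a premium."""
    return self_managed_infra * (1 + premium)


def self_managed_tco(
    infra: float,
    engineer_monthly_cost: float,
    engineers_needed: float,
    tooling: float = 0.0,
) -> float:
    """Monthly total cost of ownership including the 'hidden' operational costs."""
    return infra + engineer_monthly_cost * engineers_needed + tooling


if __name__ == "__main__":
    infra = 10_000.0  # hypothetical monthly infrastructure spend
    managed = managed_cost(infra)
    # Assume half an engineer's time plus monitoring and backup tooling.
    own = self_managed_tco(infra, engineer_monthly_cost=12_000.0,
                           engineers_needed=0.5, tooling=500.0)
    print(f"managed: {managed:,.0f}  self-managed: {own:,.0f}")
```

With these made-up inputs the managed option comes out cheaper despite the premium, because the staffing line dominates. At a larger scale the fixed staffing cost is spread over more infrastructure and the comparison can flip.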

What Factors Should Guide Your Container Orchestration Platform Choice?

Selecting the right container orchestration platform requires careful evaluation of technical requirements, organizational constraints, and long-term strategic goals. The decision impacts development velocity, operational complexity, and infrastructure costs for years.

Team Expertise and Learning Investment

Assess your team's current container and DevOps expertise honestly. Kubernetes offers the most comprehensive features but requires significant learning investment. Teams new to containers may benefit from starting with Docker Swarm or managed cloud services before transitioning to more complex platforms.

Consider the availability of training resources and community support. Platforms like AppBull often feature discounted training courses and development tools that can accelerate team onboarding and reduce learning costs.

Scalability and Performance Requirements

Evaluate current and projected workload characteristics:

- Application scale: Number of services, containers, and nodes

- Traffic patterns: Steady state vs bursty workloads

- Resource requirements: CPU, memory, and storage demands

- Geographic distribution: Multi-region deployment needs

Small to medium deployments (under 100 containers) can often use simpler solutions like Docker Swarm effectively. Large-scale deployments benefit from Kubernetes' advanced scheduling and resource optimization capabilities.
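The guidance so far can be condensed into a small heuristic. The sketch below is deliberately simplistic: the `Requirements` fields and the `suggest_platform` function are hypothetical names, the 100-container threshold comes from the rule of thumb above, and a real decision would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    containers: int                # expected number of running containers
    team_knows_kubernetes: bool    # existing Kubernetes expertise on the team
    mixed_workloads: bool = False  # VMs or standalone binaries alongside containers
    prefers_managed: bool = False  # willing to pay for a managed cloud service


def suggest_platform(req: Requirements) -> str:
    """Return a starting point for evaluation, not a final answer."""
    if req.mixed_workloads:
        return "Nomad"
    if req.containers < 100 and not req.team_knows_kubernetes:
        return "managed cloud service" if req.prefers_managed else "Docker Swarm"
    return "managed Kubernetes" if req.prefers_managed else "Kubernetes"


if __name__ == "__main__":
    print(suggest_platform(Requirements(containers=40, team_knows_kubernetes=False)))
    print(suggest_platform(Requirements(containers=800, team_knows_kubernetes=True,
                                        prefers_managed=True)))
```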

Integration and Ecosystem Requirements

Consider how container orchestration integrates with existing tools and workflows. Organizations heavily invested in specific cloud providers may prefer native container services. Those using automated testing frameworks and API monitoring solutions need platforms with compatible integrations.

Evaluate requirements for:

- CI/CD integration: Pipeline tools and deployment workflows

- Monitoring and logging: Observability platform compatibility

- Security tools: Vulnerability scanning and policy enforcement

- Storage systems: Persistent volume and backup solutions

What Are the Best Practices for Container Orchestration Platform Implementation?

Successful container orchestration platform adoption requires careful planning, phased implementation, and attention to operational practices. These best practices help organizations avoid common pitfalls and maximize platform benefits.

Start with Development and Testing Environments

Begin container orchestration adoption in non-production environments to build expertise and identify potential issues. This approach allows teams to experiment with platform features, develop operational procedures, and create automation scripts without impacting critical workloads.

Use development environments to establish:

- Container image standards: Base images, security scanning, and tagging conventions

- Resource limits: CPU and memory allocations for different application types

- Networking policies: Service communication and security boundaries

- Backup and recovery procedures: Data persistence and disaster recovery plans

Implement Comprehensive Monitoring and Observability

Container orchestration platforms introduce additional complexity that requires robust monitoring. Implement observability solutions that provide visibility into application performance, infrastructure health, and platform operations.

Essential monitoring components include:

- Infrastructure metrics: Node health, resource utilization, and network performance

- Application metrics: Service availability, response times, and error rates

- Platform events: Deployment status, scaling events, and configuration changes

- Security events: Access attempts, policy violations, and vulnerability alerts
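Two of the application metrics listed above, error rate and response time, reduce to simple arithmetic once the raw samples are collected. The sketch below assumes you already have request counts and latency samples in memory. In a real deployment a metrics system would compute these for you, and the nearest-rank percentile used here is just one common definition.

```python
import math


def error_rate(total_requests: int, failed_requests: int) -> float:
    """Fraction of requests that failed; 0.0 when there was no traffic."""
    return failed_requests / total_requests if total_requests else 0.0


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list of samples (pct in 1..100)."""
    if not samples:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


if __name__ == "__main__":
    latencies_ms = [120, 80, 95, 300, 110]  # hypothetical response times
    print("error rate:", error_rate(total_requests=2000, failed_requests=14))
    print("p50:", percentile(latencies_ms, 50))
    print("p95:", percentile(latencies_ms, 95))
```

Tail percentiles such as p95 are usually more useful for alerting than averages, since a single slow request (300 ms here) disappears in a mean but dominates the tail.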

Establish GitOps and Infrastructure as Code Practices

Treat container orchestration configuration as code, storing all manifests, policies, and infrastructure definitions in version control. This approach enables reproducible deployments, audit trails, and collaborative development practices.

GitOps workflows automatically sync cluster state with repository contents, reducing manual configuration drift and improving deployment reliability. Popular tools like ArgoCD and Flux provide robust GitOps capabilities for Kubernetes environments.
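At its core, the sync loop in a GitOps tool compares the desired state in the repository with the live state in the cluster. The following is a toy sketch of that comparison, not how ArgoCD or Flux are actually implemented: `diff_state` is a hypothetical helper, and it only checks fields that the desired state declares, ignoring anything extra the cluster adds (such as `status`).

```python
def diff_state(desired: dict, live: dict, path: str = "") -> list[str]:
    """List the places where live state departs from the declared desired state."""
    drift = []
    for key, want in desired.items():
        here = f"{path}.{key}" if path else key
        if key not in live:
            drift.append(f"{here}: missing (want {want!r})")
        elif isinstance(want, dict) and isinstance(live[key], dict):
            # Recurse into nested objects, extending the dotted path.
            drift.extend(diff_state(want, live[key], here))
        elif live[key] != want:
            drift.append(f"{here}: {live[key]!r} != {want!r}")
    return drift


if __name__ == "__main__":
    desired = {"spec": {"replicas": 3, "image": "web:1.4.2"}}  # from version control
    live = {"spec": {"replicas": 5, "image": "web:1.4.2"},     # someone scaled by hand
            "status": {"readyReplicas": 5}}
    for line in diff_state(desired, live):
        print("drift:", line)
```

A real controller would then reconcile the difference, typically by re-applying the manifest from the repository.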

How Can You Optimize Costs When Using Container Orchestration Platforms?

Container orchestration platforms can significantly reduce infrastructure costs through improved resource utilization, but poor configuration and management practices can lead to unexpected expenses. Implementing cost optimization strategies helps organizations maximize return on investment.

Right-Sizing and Resource Management

Proper resource allocation prevents both resource starvation and waste. Use historical performance data to establish appropriate CPU and memory requests and limits for each application component.

Implement resource management best practices:

- Resource requests: Guarantee minimum resources for reliable performance

- Resource limits: Prevent applications from consuming excessive resources

- Quality of Service classes: Prioritize critical workloads during resource contention

- Horizontal Pod Autoscaling: Automatically adjust replica counts based on demand
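Two of these mechanisms follow rules simple enough to sketch. The first function approximates how Kubernetes assigns a Quality of Service class from CPU and memory requests and limits. The second applies the basic Horizontal Pod Autoscaler formula, `ceil(currentReplicas * currentMetric / targetMetric)`. Both are simplifications: quantities are compared as literal strings (so `"500m"` and `"0.5"` would not be treated as equal), and the autoscaler's tolerance band and stabilization window are ignored.

```python
import math


def qos_class(containers: list[dict]) -> str:
    """Approximate the QoS class of a pod from its containers' resources."""
    any_set = False
    guaranteed = True
    for c in containers:
        limits = c.get("limits", {})
        # A missing request defaults to the limit when a limit is set.
        requests = {**limits, **c.get("requests", {})}
        if limits or requests:
            any_set = True
        for res in ("cpu", "memory"):
            if res not in limits or requests.get(res) != limits[res]:
                guaranteed = False
    if not any_set:
        return "BestEffort"
    return "Guaranteed" if guaranteed else "Burstable"


def desired_replicas(current: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Basic HPA scaling formula, clamped to the configured bounds."""
    desired = math.ceil(current * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    pod = [{"requests": {"cpu": "250m", "memory": "256Mi"},
            "limits": {"cpu": "250m", "memory": "256Mi"}}]
    print(qos_class(pod))
    # 4 replicas at 90% CPU with a 60% target.
    print(desired_replicas(current=4, current_metric=90, target_metric=60))
```

Under node memory pressure, BestEffort pods are the first candidates for eviction and Guaranteed pods the last, which is why critical workloads generally get equal requests and limits.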

Cluster Optimization and Multi-Tenancy

Optimize cluster density by consolidating workloads and implementing effective multi-tenancy strategies. Use node affinity rules, taints, and tolerations to optimize workload placement and resource utilization.
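Taints and tolerations are easier to follow once the matching rule is written out. The sketch below mirrors the documented Kubernetes semantics in simplified form: a toleration matches a taint when the effects agree (or the toleration leaves the effect empty) and either the operator is `Exists` or the key and value are equal. The `dedicated=gpu` taint is a made-up example, and the real scheduler considers far more than taints when placing a pod.

```python
def tolerates(toleration: dict, taint: dict) -> bool:
    """Return True if a single toleration matches a single taint."""
    # An empty effect on the toleration matches any taint effect.
    if toleration.get("effect") and toleration["effect"] != taint["effect"]:
        return False
    if toleration.get("operator", "Equal") == "Exists":
        # An empty key with Exists tolerates every taint.
        return not toleration.get("key") or toleration["key"] == taint["key"]
    return (toleration.get("key") == taint["key"]
            and toleration.get("value", "") == taint.get("value", ""))


def schedulable(node_taints: list[dict], tolerations: list[dict]) -> bool:
    """A pod may run on a node only if every hard taint is tolerated."""
    return all(
        any(tolerates(t, taint) for t in tolerations)
        for taint in node_taints
        # PreferNoSchedule is a soft preference and never blocks placement.
        if taint["effect"] in ("NoSchedule", "NoExecute")
    )


if __name__ == "__main__":
    gpu_taint = {"key": "dedicated", "value": "gpu", "effect": "NoSchedule"}
    ml_pod = [{"key": "dedicated", "operator": "Equal",
               "value": "gpu", "effect": "NoSchedule"}]
    print(schedulable([gpu_taint], ml_pod))  # tolerated
    print(schedulable([gpu_taint], []))      # ordinary pod is kept off the node
```

Tainting expensive nodes and tolerating the taint only in the workloads that need them keeps general-purpose pods from occupying specialized capacity.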

Consider implementing:

- Mixed instance types: Use appropriate compute resources for different workload characteristics

- Spot instances: Leverage discounted compute for fault-tolerant workloads

- Cluster autoscaling: Automatically adjust cluster size based on demand

- Resource quotas: Prevent individual teams or applications from consuming excessive resources

When implementing cost optimization strategies, consider leveraging tools and automation solutions available through platforms like AppBull, which often feature specialized container management and optimization tools at discounted rates.

What Security Considerations Are Critical for Container Orchestration?

Container orchestration platforms introduce unique security challenges that require specialized approaches and tools. Security must be integrated throughout the container lifecycle, from image creation to runtime protection.

Container Image Security

Secure container images form the foundation of orchestration platform security. Implement comprehensive image scanning, vulnerability management, and supply chain security practices.

Key image security practices include:

- Base image selection: Use minimal, regularly updated base images

- Vulnerability scanning: Scan images for known security vulnerabilities

- Image signing: Verify image authenticity and integrity

- Registry security: Secure image storage and access controls
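One inexpensive check that supports these practices is refusing image references that are not pinned. The sketch below is a hypothetical lint function, not part of any scanner: it flags references without a digest and, among those, references that resolve to the mutable `latest` tag either explicitly or by omission.

```python
def image_issues(ref: str) -> list[str]:
    """Return pinning problems found in a container image reference."""
    issues = []
    name, _, digest = ref.partition("@")
    # A tag is a ':' suffix on the last path component; a ':' earlier in the
    # reference belongs to a registry host:port.
    last_component = name.rsplit("/", 1)[-1]
    tag = last_component.partition(":")[2]
    if not digest:
        issues.append("not pinned by digest")
        if not tag or tag == "latest":
            issues.append("resolves to the mutable 'latest' tag")
    return issues


if __name__ == "__main__":
    # All references below are illustrative.
    for ref in ("nginx", "nginx:1.27", "registry.example.com:5000/web:latest"):
        print(ref, "->", image_issues(ref) or "ok")
```

A check like this fits naturally in CI, failing the build before an unpinned image ever reaches the registry.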

Runtime Security and Network Policies

Runtime security focuses on protecting applications and infrastructure during container execution. Implement network policies, pod security standards, and runtime monitoring to detect and prevent security incidents.

Essential runtime security components include:

- Network policies: Control traffic flow between services and external systems

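- Pod Security Standards: Enforce security contexts and capabilities through Pod Security Admission, which replaced the PodSecurityPolicy API removed in Kubernetes 1.25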

- Service mesh: Implement mutual TLS and traffic encryption

- Runtime monitoring: Detect anomalous behavior and potential threats
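A common starting point for network policies is to deny all ingress in a namespace and then allow specific paths. The sketch below builds both `networking.k8s.io/v1` NetworkPolicy objects as Python dictionaries and prints them as JSON. The namespace, the `app` labels, and the port are assumptions for the example, and the policies only take effect if the cluster's network plugin enforces NetworkPolicy.

```python
import json


def default_deny_ingress(namespace: str) -> dict:
    """Select every pod in the namespace and allow no inbound traffic."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        # An empty podSelector matches all pods; no ingress rules means deny.
        "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
    }


def allow_ingress(namespace: str, target_app: str, source_app: str, port: int) -> dict:
    """Allow TCP traffic to target_app pods only from source_app pods."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{source_app}-to-{target_app}",
                     "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": target_app}},
            "policyTypes": ["Ingress"],
            "ingress": [{
                "from": [{"podSelector": {"matchLabels": {"app": source_app}}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


if __name__ == "__main__":
    # "shop", "api", and "frontend" are hypothetical names.
    for policy in (default_deny_ingress("shop"),
                   allow_ingress("shop", "api", "frontend", 8080)):
        print(json.dumps(policy, indent=2))
```

Because policies are additive, the allow rule opens exactly one path through the default deny, and everything else stays blocked.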

Integration with existing security tools and workflows becomes crucial for organizations with established security practices. Consider how container orchestration platforms integrate with database security tools and other critical infrastructure components.

Future Trends in Container Orchestration Platforms

The container orchestration landscape continues evolving rapidly, with new technologies and approaches emerging to address current limitations and enable new use cases. Understanding these trends helps organizations make informed long-term platform decisions.

WebAssembly (WASM) integration represents a significant trend, with platforms beginning to support WebAssembly modules alongside traditional containers. This capability enables faster cold starts, improved security isolation, and more efficient resource utilization for specific workload types.

Edge computing optimization drives development of lightweight orchestration solutions suitable for resource-constrained environments. Platforms are implementing edge-specific features like intermittent connectivity handling, local data processing, and minimal resource footprints.

AI/ML workload optimization becomes increasingly important as organizations deploy machine learning models at scale. Container orchestration platforms are adding GPU scheduling, model serving capabilities, and integration with ML pipeline tools.

The integration of container orchestration with modern development workflows continues deepening. Organizations benefit from comprehensive toolchains that span from code repository management to production deployment and monitoring.

Practical Implementation Checklist for Container Orchestration

Successfully implementing container orchestration requires systematic planning and execution. This checklist provides actionable steps for organizations beginning their container orchestration journey.

Pre-Implementation Assessment

- Assess current infrastructure: Document existing applications, dependencies, and infrastructure

- Evaluate team skills: Identify training needs and expertise gaps

- Define success criteria: Establish measurable goals for the implementation

- Choose pilot applications: Select suitable applications for initial migration

- Plan resource allocation: Budget for infrastructure, tools, and training

Platform Selection and Setup

- Compare platform options: Evaluate based on requirements and constraints

- Set up development environment: Create safe space for learning and experimentation

- Install monitoring tools: Implement observability before deploying applications

- Configure security policies: Establish baseline security configurations

- Create deployment procedures: Document standard operating procedures

Application Migration and Optimization

- Containerize pilot applications: Create container images following best practices

- Deploy to testing environment: Validate functionality and performance

- Implement CI/CD integration: Automate build and deployment processes

- Perform load testing: Validate scaling and performance characteristics

- Plan production migration: Develop rollback procedures and migration timeline

Organizations should also consider leveraging specialized project management tools to coordinate complex container orchestration implementations and ensure successful delivery.

Container orchestration platforms have matured significantly by 2026, offering robust solutions for organizations of all sizes. Kubernetes remains the dominant choice for complex, large-scale deployments, while Docker Swarm provides an accessible entry point for smaller teams. Alternative platforms like Nomad and OpenShift address specific requirements, and cloud-native services reduce operational complexity. Success depends on carefully evaluating organizational needs, technical requirements, and team capabilities. The investment in proper platform selection, implementation planning, and team training pays dividends through improved development velocity, operational efficiency, and application reliability. As containerized workloads become increasingly critical to business operations, choosing the right orchestration platform becomes a strategic decision that impacts long-term technical capabilities and competitive advantage.